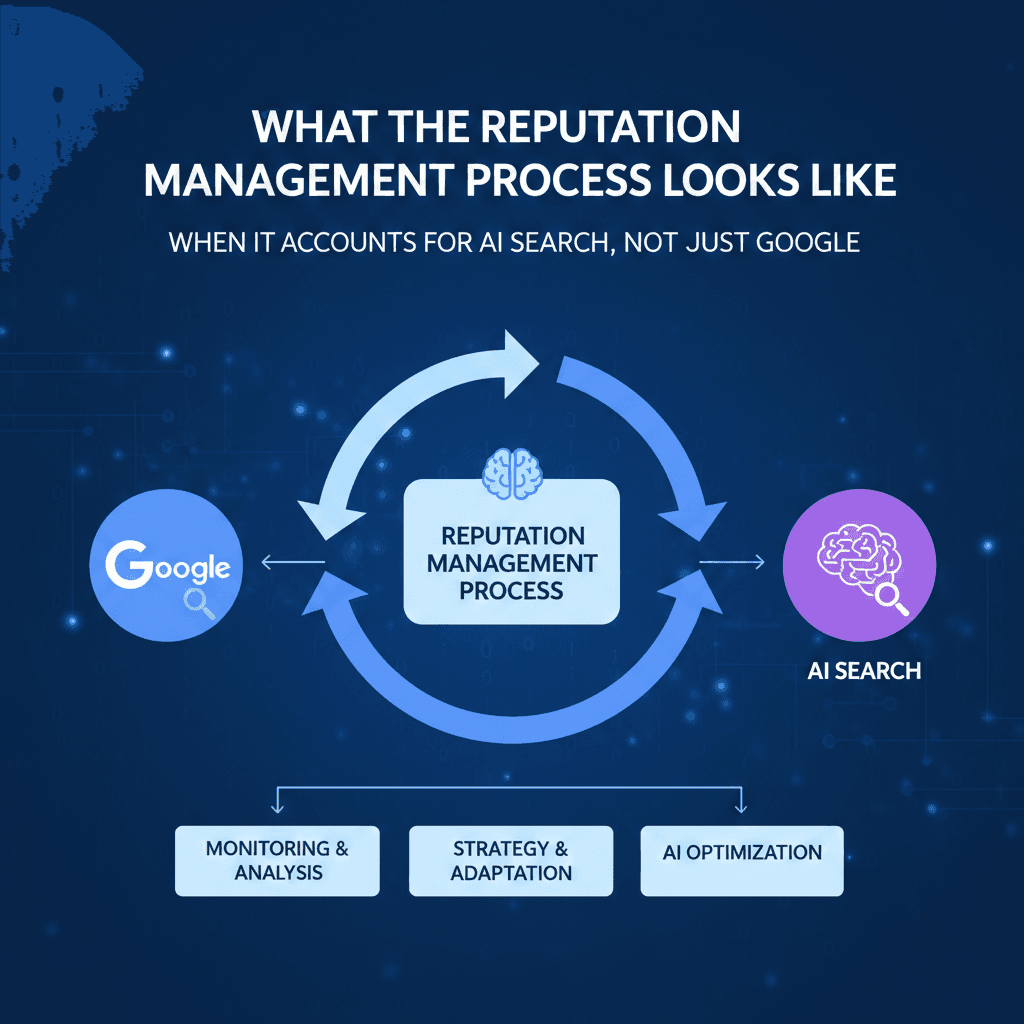

What the Reputation Management Process Looks Like When It Accounts for AI Search, Not Just Google

Article by

Milo

ESL Content Coordinator & Educator

Most reputation management processes were built around Google. Track rankings, suppress negative search results, generate reviews, and monitor brand mentions. That framework still matters. But it now accounts for only part of the picture, because a growing share of how people encounter information about brands happens through AI-generated answers in ChatGPT, Perplexity, Gemini, and similar tools.

These platforms don't return ten blue links. They synthesize information, cite selective sources, and sometimes fabricate details with confidence. A brand that ranks well on Google can still be misrepresented or hallucinated entirely in AI search results. The reputation management process needs to account for both environments.

Here's what that looks like across seven phases.

How AI Search Differs from Traditional Google Results

AI search engines generate synthesized paragraph-style responses drawn from entity recognition, knowledge graphs, and E-E-A-T signals rather than keyword matching and backlinks. The result format, ranking logic, and failure modes are fundamentally different from those of traditional SERPs.

| Feature | AI Search (Perplexity, ChatGPT, Gemini) | Google Traditional SERPs |
| --- | --- | --- |
| Result Format | Synthesized paragraphs | Ten ranked links |
| Click Behavior | High zero-click rates | Multiple clickable results |
| Ranking Basis | Entity recognition, E-E-A-T | Backlinks, keywords |
| Query Handling | Conversational, long-tail | Short-tail keywords |

The practical implication is that being excluded from an AI-generated answer is a visibility problem that SEO alone can't solve. A hotel chain with strong Google rankings might lose prominence entirely when AI search pulls from a knowledge panel that hasn't been updated or verified. Managing that requires a different set of tactics than managing a SERP ranking.

Phase 1: Audit Your Brand Across AI Platforms, Not Just Search

The first phase of a modern reputation management process is a baseline audit across at least seven platforms: Google SGE (now AI Overviews), ChatGPT, Perplexity AI, Bing Chat (now Microsoft Copilot), Claude, You.com, and traditional SERPs. Most reputation audits stop at Google. That omission leaves significant blind spots.

The audit process runs as follows:

Test 50 brand queries across all seven platforms and document AI responses verbatim

Screenshot knowledge panels and export data to a tracking spreadsheet

Score entity sentiment on a 1 to 10 scale per platform

Map which sources each platform cites for brand-related answers

Flag any hallucinated facts for correction

Budget approximately 45 minutes for the initial query sweep and an additional hour for documentation. After the initial audit, a 15-minute daily monitoring routine (checking the top queries on each engine and logging any response changes) keeps the data current.
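The tracking spreadsheet described above can be sketched as a simple row structure exported to CSV. This is a minimal illustration, not a prescribed format: the field names, the `AuditRow` class, and the "ExampleCo" sample row are all hypothetical placeholders.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields
from datetime import date

# Platform names taken from the audit list above; adjust to what you actually test.
PLATFORMS = ["Google SGE", "ChatGPT", "Perplexity AI", "Bing Chat",
             "Claude", "You.com", "Traditional SERP"]

@dataclass
class AuditRow:
    """One logged response. Field names are illustrative assumptions."""
    audit_date: str
    platform: str
    query: str
    response_verbatim: str
    sentiment_1_to_10: int
    cited_sources: str        # semicolon-separated source names or URLs
    hallucination_flag: bool

    def __post_init__(self):
        # Enforce the 1 to 10 sentiment scale used in the audit steps.
        if not 1 <= self.sentiment_1_to_10 <= 10:
            raise ValueError("sentiment must be between 1 and 10")
        if self.platform not in PLATFORMS:
            raise ValueError(f"unknown platform: {self.platform}")

def to_csv(rows):
    """Serialize audit rows to CSV text for import into a spreadsheet."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(AuditRow)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buf.getvalue()

# Hypothetical sample row; "ExampleCo" is a placeholder brand.
rows = [
    AuditRow(date.today().isoformat(), "Perplexity AI", "Tell me about ExampleCo",
             "ExampleCo is a ...", 7, "exampleco.example/about", False),
]
print(to_csv(rows))
```

Logging the verbatim response alongside the date makes it possible to diff answers between daily checks rather than relying on memory.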

AI Chatbot Query Testing Protocol

Test 25 conversational queries per brand across ChatGPT-4o, Claude, Gemini, and Perplexity. Use five query types as your framework:

"Tell me about [brand]"

"Compare [brand] vs [competitor]"

"Recent news about [brand]"

"[Brand] customer reviews?"

"[Brand] controversies?"

Score each response for accuracy on a 1 to 5 scale and note any hallucinated details specifically. A Delta Air Lines audit found that three of five AI engines hallucinated elements of the company's 2023 safety record. That kind of finding shows where corrective content effort should be focused.

| Query | Engine | Accuracy Score | Hallucinations |
| --- | --- | --- | --- |
| Tell me about [brand] | ChatGPT-4o | 4/5 | None |
| [Brand] controversies? | Claude | 2/5 | Yes, fact error |
| Compare [brand] vs [competitor] | Gemini | 5/5 | None |
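One way to turn the five query types and the scoring table into something repeatable is sketched below. The function names and the sample scores (which mirror the illustrative table rows) are assumptions for demonstration only; the responses themselves would still be collected and scored by hand.

```python
from collections import defaultdict
from statistics import mean

# The five query types from the protocol, as fill-in templates.
QUERY_TEMPLATES = [
    "Tell me about {brand}",
    "Compare {brand} vs {competitor}",
    "Recent news about {brand}",
    "{brand} customer reviews?",
    "{brand} controversies?",
]

def build_queries(brand, competitor):
    """Expand the templates for one brand and one competitor."""
    return [t.format(brand=brand, competitor=competitor) for t in QUERY_TEMPLATES]

def summarize(results):
    """Aggregate (query, engine, accuracy 1-5, hallucinated) tuples per engine."""
    by_engine = defaultdict(list)
    for _query, engine, accuracy, hallucinated in results:
        if not 1 <= accuracy <= 5:
            raise ValueError("accuracy must be on the 1 to 5 scale")
        by_engine[engine].append((accuracy, hallucinated))
    return {
        engine: {
            "mean_accuracy": mean(a for a, _ in scored),
            "hallucinations": sum(1 for _, h in scored if h),
        }
        for engine, scored in by_engine.items()
    }

# Sample scores mirroring the example table above (illustrative, not real data).
results = [
    ("Tell me about [brand]", "ChatGPT-4o", 4, False),
    ("[Brand] controversies?", "Claude", 2, True),
    ("Compare [brand] vs [competitor]", "Gemini", 5, False),
]
summary = summarize(results)
for engine, stats in summary.items():
    print(engine, stats)
```

Engines with a low mean accuracy or a non-zero hallucination count are the natural starting point for corrective content.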

Phase 2: Identify AI-Specific Reputation Risks

AI search introduces five reputation risks that don't exist in traditional search. The reason these risks are distinct is that generative AI can produce confident, well-formatted misinformation without any malicious source driving it. Traditional search surfaces what exists. AI search can generate what doesn't.

The five risks are:

Hallucinated facts: AI fabricates events, statistics, or incidents with no basis in reality

Training data bias: Older or disproportionately negative training data skews AI responses

Zero-context citations: AI cites sources that don't actually support the claim being made

Personalization volatility: AI responses vary by user, making consistent brand representation difficult

Zero-click damage: Negative AI-generated summaries reach users who never visit a source to verify

Rate each risk by likelihood and impact to prioritize your response effort. Hallucinations and zero-click damage typically score highest because both are common and both cause harm before anyone can intervene.
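The likelihood-and-impact rating can be sketched as a small scoring table. The 1 to 5 scales and every number below are placeholder assumptions chosen to illustrate the mechanics; substitute your own ratings from the audit.

```python
# (likelihood, impact) on assumed 1 to 5 scales. Placeholder values only.
RISKS = {
    "Hallucinated facts": (4, 5),
    "Training data bias": (3, 3),
    "Zero-context citations": (3, 4),
    "Personalization volatility": (4, 2),
    "Zero-click damage": (4, 4),
}

def prioritize(risks):
    """Rank risks by likelihood x impact, highest score first."""
    scored = [(name, likelihood * impact)
              for name, (likelihood, impact) in risks.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

ranked = prioritize(RISKS)
for name, score in ranked:
    print(f"{score:>2}  {name}")
```

A multiplicative score is only one convention; a weighted sum or a simple high/medium/low grid would serve the same prioritization purpose.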

Hallucination Types and How to Detect Them

LLM hallucinations in brand contexts fall into four categories: fabricated events (a lawsuit or data breach that didn't happen), wrong statistics (altered complaint or revenue figures), false attributions (quotes or actions credited to the wrong person), and mixed-entity confusion (blending your brand with a similarly named competitor).

Detection requires cross-checking each AI-cited source directly, verifying dates and numerical claims against public records, and testing the same query across multiple AI engines to see whether the hallucination is consistent or isolated. One brand successfully corrected persistent hallucinations within 90 days by publishing corrective microsites with structured data that gave AI engines accurate, citable source material.
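The "consistent or isolated" check from the cross-engine test can be expressed as a small classifier. This is a sketch under stated assumptions: the 50% cutoff and the label names are arbitrary choices, and the per-engine observations are recorded manually.

```python
def classify_claim(observations, threshold=0.5):
    """Classify a false claim by how many engines repeat it.

    observations: dict mapping engine name -> True if that engine
    repeated the false claim for the same query.
    threshold: assumed cutoff; a share above it counts as "consistent".
    """
    if not observations:
        raise ValueError("need at least one engine observation")
    repeated = [engine for engine, hit in observations.items() if hit]
    if not repeated:
        return "not reproduced"
    share = len(repeated) / len(observations)
    return "consistent" if share > threshold else "isolated"

# Hypothetical observations for one false claim.
widespread = {"ChatGPT": True, "Claude": True, "Gemini": True, "Perplexity": False}
one_off = {"ChatGPT": False, "Claude": True, "Gemini": False, "Perplexity": False}

print(classify_claim(widespread))
print(classify_claim(one_off))
```

A consistent hallucination suggests a shared upstream source worth tracing, while an isolated one may only need reporting to a single provider.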

Phase 3: Create Content Optimized for AI Indexing

AI search engines cite authoritative sources. The reputation management process must therefore include a content strategy that meets the standards those engines use to determine authority. The twelve E-E-A-T signals that most consistently influence AI citation are:

Expert authors with 10 or more years of documented experience and verifiable credentials

Original research based on first-party data from 500 or more sources

Schema markup covering FAQ, HowTo, and Product content types

A verified Wikidata entry for the brand entity

A DBpedia entry linked to structured data

Citations from .edu or .gov domains

Video content with full, timestamped transcripts

Podcast show notes with speaker bios and key quotes

Interview series featuring multiple named industry contributors

First-party sentiment analysis datasets

Backlinks from domains with high authority scores

Content refreshed on at least a 90-day cycle

Seven content types perform particularly well for AI discoverability: expert-authored research studies, schema-rich FAQ pages, first-party data sets, Wikidata and DBpedia entity pages, video transcripts, podcast show notes, and executive interview series. Each one targets a different entry point into AI knowledge graphs.

Publishing Calendar for AI-Optimized Content

Structure a 90-day publishing calendar as follows:

Weeks 1 to 4: Expert-authored studies and FAQ schema pages

Weeks 5 to 8: First-party datasets and Wikidata entity submissions

Weeks 9 to 12: Video content, podcast episodes, and interview series

Every piece should include a verified author byline with credentials, original data or analysis, schema markup, and Wikidata links. This combination gives AI engines the structured, attributable content they need to cite your brand accurately.
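As an illustration of the schema markup and Wikidata links mentioned above, the sketch below generates JSON-LD for a schema.org `FAQPage` and an `Organization` whose `sameAs` property points to a Wikidata entry. The brand name, URLs, and Wikidata ID are hypothetical placeholders, and the helper function names are not from any library.

```python
import json

def faq_jsonld(qa_pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }

def organization_jsonld(name, url, wikidata_url):
    """Build Organization JSON-LD that links the brand to its Wikidata entity."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": [wikidata_url],
    }

# Placeholder values; replace with the brand's real details.
faq = faq_jsonld([("When was ExampleCo founded?", "ExampleCo was founded in ...")])
org = organization_jsonld(
    "ExampleCo",
    "https://www.exampleco.example",
    "https://www.wikidata.org/wiki/Q00000000",  # hypothetical entity ID
)

# Each object would be embedded in a <script type="application/ld+json"> tag.
print(json.dumps(faq, indent=2))
print(json.dumps(org, indent=2))
```

Generating the markup from one source of truth keeps page content and structured data from drifting apart during the 90-day refresh cycle.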

Phase 4: Distribute Across the Full PESO Framework

Distribution for AI reputation management follows the PESO model, covering Paid, Earned, Shared, and Owned channels across 50+ platforms. The reason breadth matters is that AI engines draw from diverse sources. Concentration in a single channel creates gaps in entity coverage that competing narratives can fill.

| PESO Type | Example Platforms | Key Tactics |
| --- | --- | --- |
| Owned | Website, YouTube, Podcast | Optimized content with schema markup |
| Paid | LinkedIn Ads, Reddit Ads | Long-tail keyword targeting for conversational search |
| Earned | HARO, Wikipedia | Pitch responses for knowledge panel mentions |
| Shared | Quora, Reddit | Brand-relevant answers to build E-E-A-T signals |

A 90-day distribution target of 300 or more placements breaks down into weekly milestones. Weeks 1 to 4 focus on setting up owned and shared channels. Weeks 5 to 8 shift to paid amplification and HARO outreach. Weeks 9 to 12 prioritize earned media and Wikipedia editing.

Automation tools significantly reduce manual workload. Zapier connects publishing workflows across platforms. Buffer handles scheduling for Reddit and Quora. The HARO Chrome extension sends real-time query alerts so pitch responses go out while the opportunity is still open.

Phase 5: Deploy a Real-Time Monitoring Stack

A complete AI-aware monitoring stack covers seven tools. NetReputation's published guidance on AI search monitoring reflects the same principle: tracking brand mentions in traditional search is necessary but not sufficient when AI-generated answers reach users who never click through to source material.

| Tool | Monthly Cost | AI Coverage | Alert Speed |
| --- | --- | --- | --- |
| Brand24 | $99 | High (ChatGPT, Perplexity) | Real-time |
| Mention | $29 | Medium (Bing AI, SGE) | Minutes |
| Google Alerts | Free | Low (Google AI Overviews) | Hourly |
| SEMrush | $120 | High (AI SERP) | Real-time |
| Ahrefs Alerts | $99 | Medium (Generative AI) | Daily |
| Perplexity API | Usage-based | High (Perplexity AI) | Instant |
| ChatGPT Plugin | Free tier | High (ChatGPT search) | On-query |

Configure Brand24 with 100 brand-related keywords, including variations for reviews and complaints. Set up Mention with Boolean strings like "brandname" AND (scam OR fraud). Configure SEMrush for AI SERP tracking on your primary branded terms. Run a daily five-minute alert triage: high-sentiment alerts first, then AI-specific mentions.
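Boolean strings of the kind shown above are easy to generate for many brand variations at once. The helper below is a hypothetical sketch that reproduces the string format used in this article; check each monitoring tool's own query syntax before pasting the output in.

```python
def boolean_query(brand, risk_terms):
    """Build a '"brand" AND (term OR term)' monitoring string.

    Multi-word risk terms are wrapped in quotes so they match as phrases.
    """
    if not risk_terms:
        raise ValueError("need at least one risk term")
    quoted = [f'"{term}"' if " " in term else term for term in risk_terms]
    return f'"{brand}" AND ({" OR ".join(quoted)})'

# Reproduces the example string from the paragraph above.
print(boolean_query("brandname", ["scam", "fraud"]))

# Hypothetical variations for review and complaint monitoring.
for variant in ["brandname", "brand name", "brandname.com"]:
    print(boolean_query(variant, ["complaint", "refund", "data breach"]))
```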

Building an AI Response Tracking Dashboard

Build a Looker Studio (formerly Google Data Studio) dashboard tracking 12 KPIs across all monitored AI engines:

Positive to negative ratio

Citation frequency per engine

Hallucination rate (manual audit)

Knowledge panel accuracy

Sentiment score

Share of voice vs. competitors

Entity salience in knowledge graphs

Topical authority signals

Review volume and velocity

Backlink changes

Domain rating shifts

Crisis flags

Set automated alerts for any drop below a 70% positive ratio or any spike in hallucination frequency. A weekly reporting template covering KPI trends, sentiment shift graphs, and prioritized action items reduces reporting time from four hours to under 30 minutes.
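The two alert conditions can be sketched as a small check that runs against each week's numbers. Two assumptions are baked in and should be adjusted: the "positive ratio" is read here as positive mentions divided by positive plus negative mentions, and a "spike" is defined as the hallucination rate rising to 1.5 times the previous period or more.

```python
def positive_share(positive, negative):
    """Share of positive mentions among positive and negative ones."""
    total = positive + negative
    return positive / total if total else None

def check_alerts(positive, negative, halluc_rate_now, halluc_rate_prev,
                 min_positive=0.70, spike_factor=1.5):
    """Return a list of alert messages for the current period.

    min_positive: the 70% threshold from the text.
    spike_factor: assumed definition of a spike (not from the source).
    """
    alerts = []
    share = positive_share(positive, negative)
    if share is not None and share < min_positive:
        alerts.append(f"positive ratio {share:.0%} is below {min_positive:.0%}")
    if halluc_rate_prev > 0:
        if halluc_rate_now >= spike_factor * halluc_rate_prev:
            alerts.append("hallucination rate spiked versus previous period")
    elif halluc_rate_now > 0:
        alerts.append("hallucinations appeared after a clean previous period")
    return alerts

# Hypothetical weekly figures.
print(check_alerts(positive=60, negative=40,
                   halluc_rate_now=0.12, halluc_rate_prev=0.05))
print(check_alerts(positive=80, negative=20,
                   halluc_rate_now=0.03, halluc_rate_prev=0.03))
```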

Phase 6: Suppress Negative AI Narratives

Negative narratives in AI search require active suppression, not just passive content creation. The five tactics ranked by effectiveness are: high-domain-authority content that outranks negative sources, Wikipedia neutralization for balanced factual entries, review platform dominance at 4.8 stars or above, expert byline placement in niche publications, and YouTube video optimization.

One brand shifted a damaging narrative from position one to position seven in AI search results within 47 days using a combination of these tactics. The 60-day execution timeline runs as follows:

Days 1 to 15: Audit and plan using Brand24 and sentiment analysis tools; identify specific suppression targets

Days 16 to 30: Publish high-DA content and begin Wikipedia editing; launch review generation campaigns

Days 31 to 45: Secure expert bylines and optimize video content for search; monitor share of voice

Days 46 to 60: Refine schema markup and backlink strategy; track real-time SERP position changes

FTC compliance applies throughout. All review generation must use authentic customer feedback without incentives that create disclosure obligations. Wikipedia edits must cite reliable, verifiable sources. Expert bylines must disclose affiliations. Document everything for transparency if any tactic is later questioned.

Phase 7: Measure Results and Iterate Quarterly

The eight KPIs that matter most for an AI-aware reputation management process are:

AI citation share, targeting 25% or more growth from baseline

Sentiment score, targeting a range of 4.2 to 4.7

Hallucination rate, targeting a reduction from 12% to 3% or below

Knowledge panel accuracy, targeting improvement from 60% to 90%

Share of voice versus primary competitors

Review generation impact across Yelp, Trustpilot, and Google

E-E-A-T alignment score across published content

Crisis response time in hours

For ROI calculation, compare monthly monitoring costs against the revenue exposure from poor AI search representation. A brand investing $12,000 per month in monitoring and content against $250,000 in potential lost sales from consistently negative AI outputs is protecting roughly 20 times its spend ($250,000 / $12,000 ≈ 20.8), provided the exposure is measured over the same month. Track savings quarterly by logging incidents that were caught and corrected before reaching significant audience exposure.
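The arithmetic behind that ratio is worth making explicit, because it depends on the period over which the exposure is measured. In the sketch below, `exposure_months` is an assumed parameter: the article's figures give about 20x only when the $250,000 exposure and the $12,000 spend cover the same single month.

```python
def mitigation_ratio(revenue_exposure, monthly_cost, exposure_months=1):
    """Revenue exposure divided by spend over the same number of months."""
    if monthly_cost <= 0 or exposure_months <= 0:
        raise ValueError("cost and period must be positive")
    return revenue_exposure / (monthly_cost * exposure_months)

same_month = mitigation_ratio(250_000, 12_000)          # exposure per month
full_year = mitigation_ratio(250_000, 12_000, 12)       # exposure per year

print(round(same_month, 1))  # 20.8
print(round(full_year, 2))   # 1.74
```

Stating the period alongside the ratio keeps the figure defensible when it is reported to stakeholders.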

Quarterly Iteration Framework

The quarterly cycle runs in four stages: Audit, Analyze, Adjust, and Deploy.

Audit covers AI search, Google search, and knowledge graph accuracy. Analyze reviews KPI trends using tools like MonkeyLearn and Brand24, focusing on declining share of voice or hallucination spikes. Adjust revises strategies based on those findings, whether that means updating structured data, refreshing content, or shifting the focus of review generation. Deploy rolls out the changes, followed by a fresh audit 30 days later to measure impact before the next full quarterly review.

The brands that manage their reputation effectively across AI search aren't doing something radically different from traditional ORM. They're doing it more systematically, across more platforms, with measurement frameworks designed for how AI engines actually source and present information.

2025 Notion4Teachers. All Rights Reserved.
